I got a chance to learn Istio, one of the most loved service mesh solutions, while working on a Kubernetes cluster at work. Before this, I had no idea what Istio was or why service meshes are favored, but now I have clear answers to both and can confidently recommend introducing Istio into your Kubernetes cluster. It offers a variety of benefits, and in this article I would like to share the four I like most.

Before we begin: what is Istio?

In a microservices architecture, different parts of your application are broken down into separate, smaller services. These services communicate with each other to deliver the full functionality of the application. Service mesh refers to a dedicated infrastructure layer built into an application that controls service-to-service communication in a microservices architecture.

Communication between services is typically complex. It needs to be fast, reliable, and secure. It needs to handle service discovery, load balancing, failure recovery, metrics, and monitoring. It also often has more complex operational requirements, like A/B testing, canary releases, rate limiting, access control, and end-to-end authentication.

This is where Istio comes in. Istio is an open-source service mesh that layers transparently onto existing distributed applications. This means you can address all of the problems above without modifying your application code!

Istio does this with sidecar proxies. When you deploy a service into a Kubernetes cluster with Istio enabled, Istio automatically injects a proxy (Envoy) as a sidecar container into each Pod, and this proxy intercepts all network traffic going in and out of the Pod. Below is Istio's architecture, taken from the official documentation.

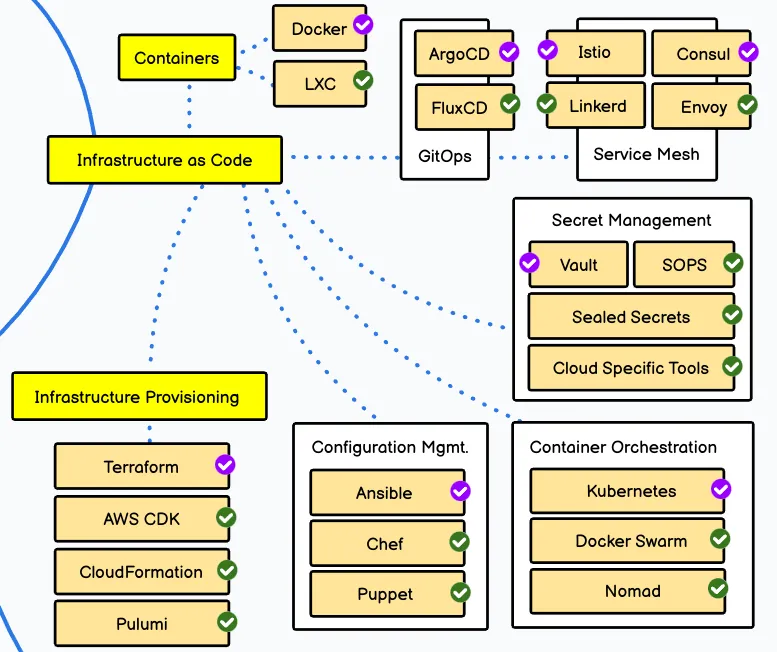
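
In practice, automatic sidecar injection is usually switched on per namespace with a label. The manifest below is a minimal sketch; the namespace name `my-app` is a placeholder, not part of the setup described in this article.

```yaml
# Hypothetical namespace for your workloads; the name is a placeholder
apiVersion: v1
kind: Namespace
metadata:
  name: my-app
  labels:
    # Asks Istio's injection webhook to add the Envoy sidecar to new Pods in this namespace
    istio-injection: enabled
```

Injection happens when a Pod is created, so Pods that were already running in the namespace only get a sidecar after they are restarted, for example by rolling out their Deployment again.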

Istio and service meshes are listed on the DevOps Roadmap as key concepts to learn as a DevOps engineer.

Now that we know the basics of Istio, let me describe the four features I like most.

1. Traffic Management

Istio allows you to configure sophisticated traffic management strategies, including weighted routing and dark launches.

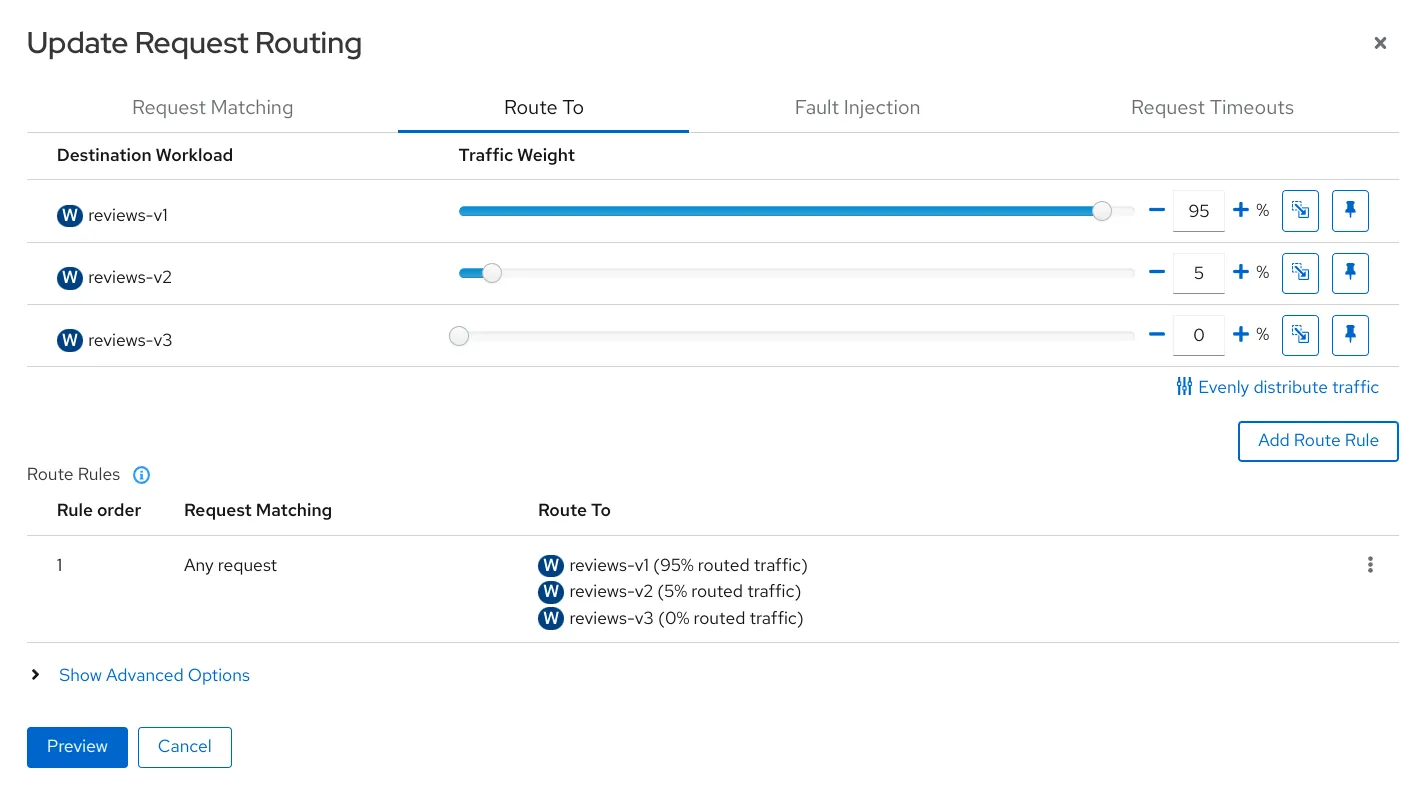

Weighted Routing (aka Canary Release)

This feature is particularly useful when you want to roll out a new version of a service. Instead of sending all traffic to the new version immediately, you can route a small percentage (say, 5%) of the traffic to the new version and gradually increase it. This approach, also known as a canary release, helps you validate the new version without disrupting all users.

You can write the YAML manifest directly, or use Kiali to create routing rules from its GUI and export them as YAML.

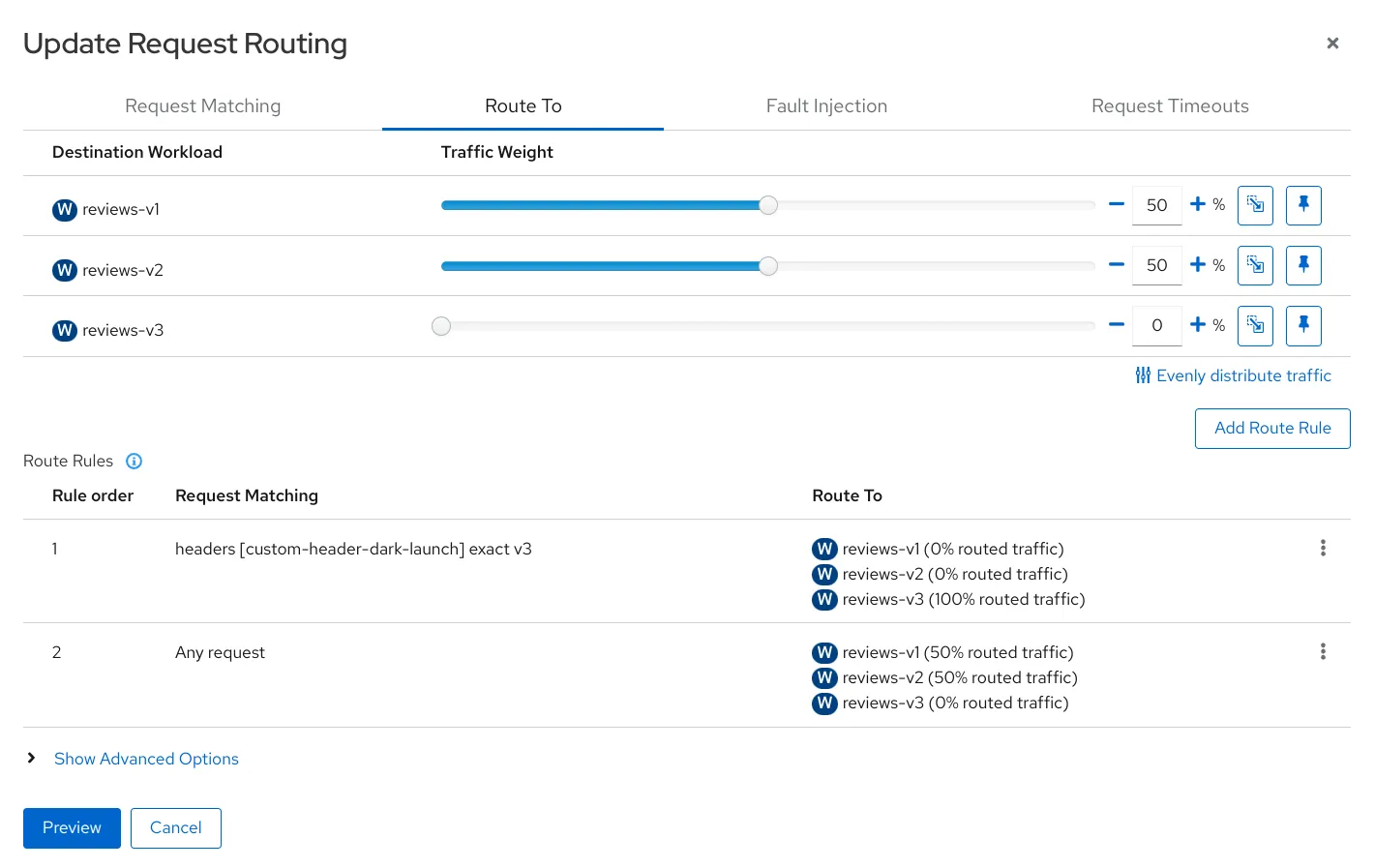
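
As a rough sketch of what such a rule looks like in YAML, the manifests below send 95% of traffic to v1 and 5% to v2. They assume a `reviews` service whose Pods carry `version: v1` and `version: v2` labels, as in Istio's Bookinfo sample; adapt the names and labels to your own services.

```yaml
# Defines the named subsets (versions) that the VirtualService can route to
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: reviews
spec:
  host: reviews
  subsets:
  - name: v1
    labels:
      version: v1   # assumed Pod label for the current version
  - name: v2
    labels:
      version: v2   # assumed Pod label for the canary version
---
# Splits traffic between the subsets; weights must add up to 100
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: reviews
spec:
  hosts:
  - reviews
  http:
  - route:
    - destination:
        host: reviews
        subset: v1
      weight: 95
    - destination:
        host: reviews
        subset: v2
      weight: 5
```

To continue the rollout, you would raise the v2 weight step by step (and lower v1 accordingly) until v2 receives 100%.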

Dark Launches

This involves deploying a new feature or service into production and selectively exposing it to a subset of users to test its performance before a full-scale launch. With Istio, you can use request headers to define the rules for who gets to see the new feature, providing a powerful and flexible mechanism for dark launches.

For example, you can configure Istio so that requests with the header custom-header-dark-launch set to v3 are routed to the reviews-v3 service.
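
A minimal sketch of such a rule, assuming that reviews-v3 is a separate Kubernetes Service in front of the v3 Pods and that the existing reviews Service keeps serving the current version:

```yaml
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: reviews
spec:
  hosts:
  - reviews
  http:
  # Requests carrying the dark-launch header go to the new version
  - match:
    - headers:
        custom-header-dark-launch:
          exact: v3
    route:
    - destination:
        host: reviews-v3   # assumed Service for the v3 Pods
  # Every other request keeps going to the current version
  - route:
    - destination:
        host: reviews
```

Rules under `http` are evaluated in order and the first match wins, so the header-based rule has to come before the catch-all route.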

I find dark launches useful because they let you test your application in the production environment as well.

2. Observability

Istio integrates with Kiali, Prometheus, and Jaeger to provide enhanced observability. Installation is very simple, since Istio provides example Kubernetes manifests for these tools on GitHub. All of them work out of the box except Jaeger, a distributed tracing tool: for it to work, your applications need to propagate a few tracing headers (for example, x-request-id and the B3 x-b3-* headers) that identify each request chain and its spans.

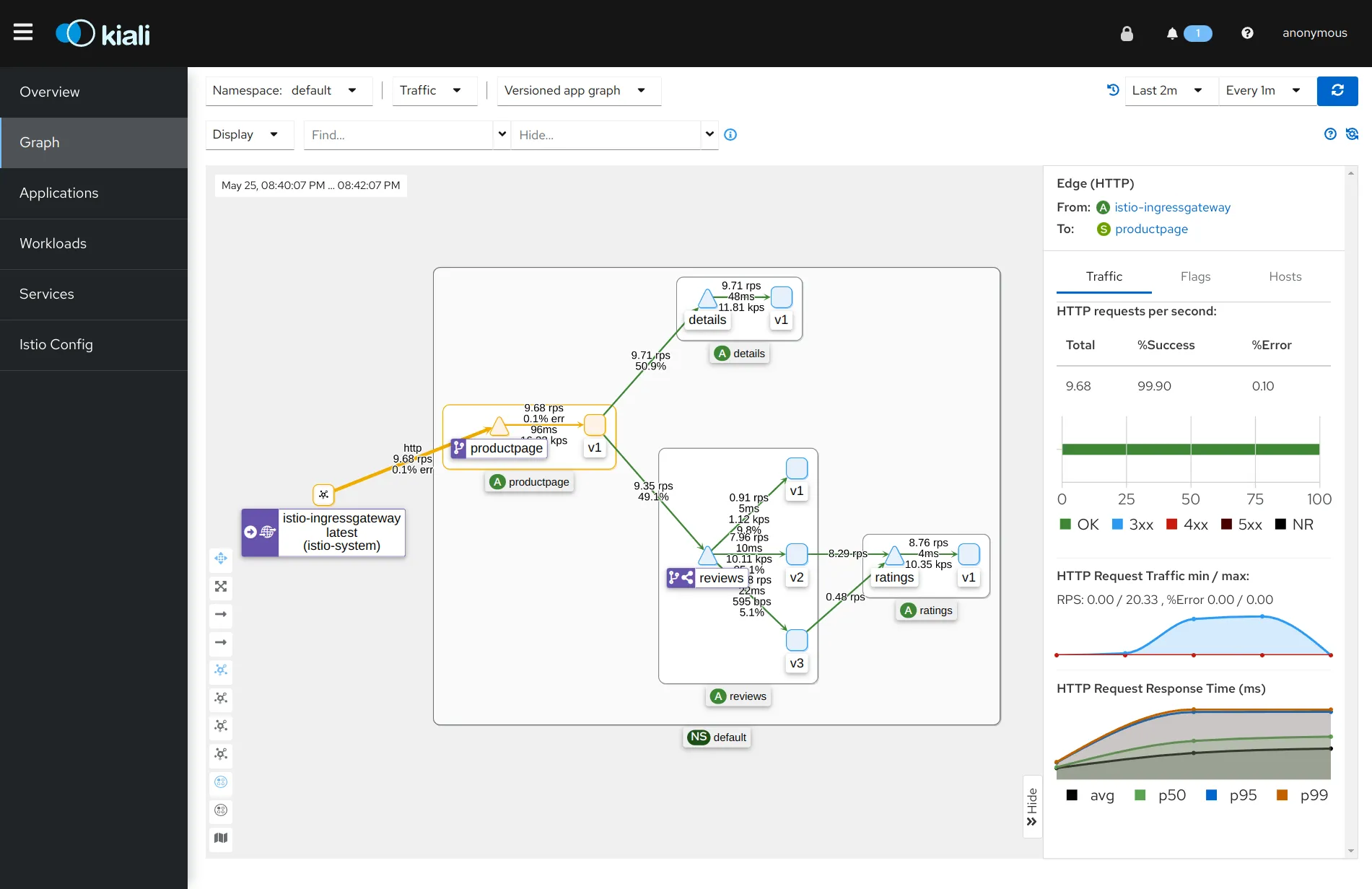

Kiali

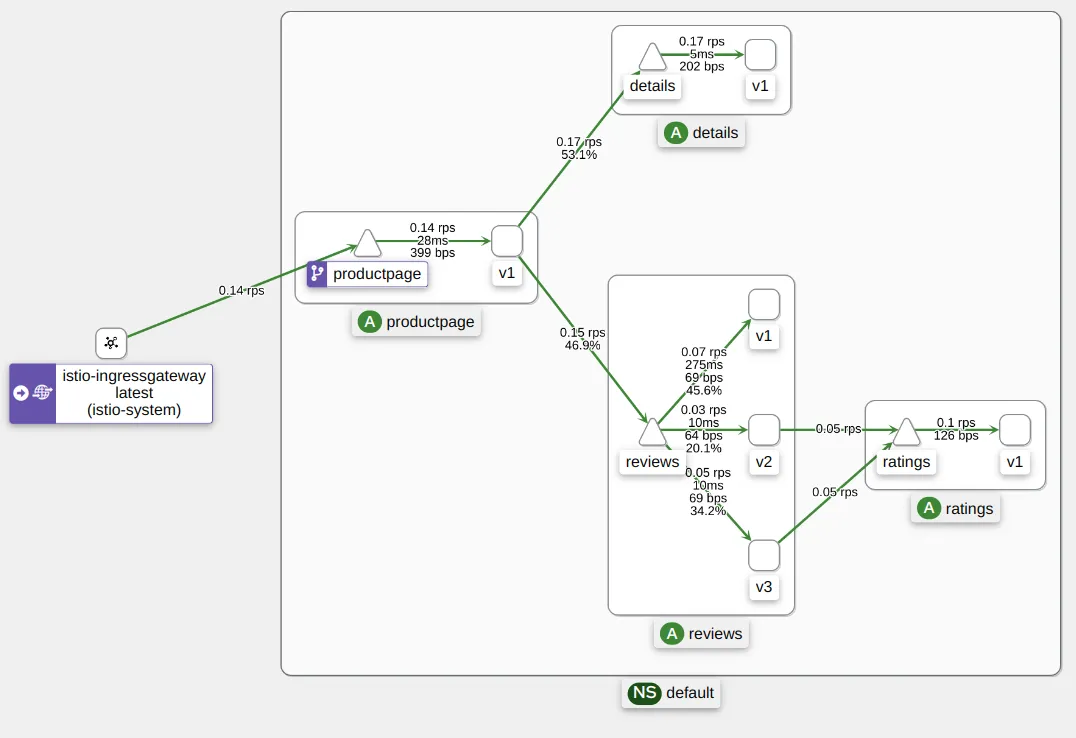

Kiali is a management console for Istio-based service meshes. It provides an overview of your entire service mesh and can validate your Istio configuration. It is especially helpful when you join a new project: Kiali visualizes the whole cluster, showing which services are deployed, how they connect to each other, and so on.

Prometheus

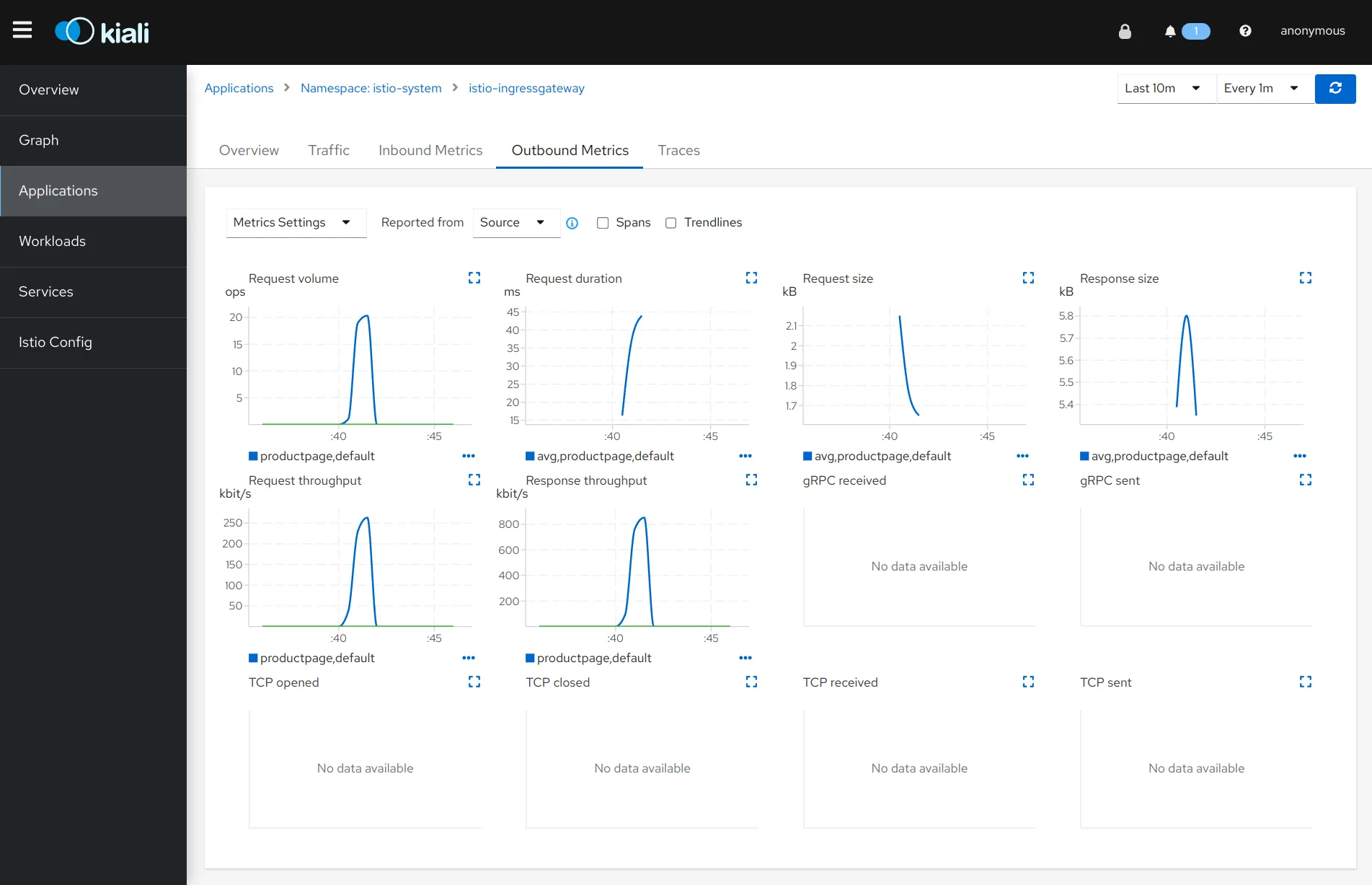

Istio exports metrics to Prometheus, a leading open-source monitoring solution. These metrics include request count, request duration, request size, and response size, allowing you to monitor the performance and health of your services effectively.

Please consult the Istio Standard Metrics documentation for the full list of metrics Istio provides.
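
To give a feel for how these metrics are queried, here is a sketch of Prometheus recording rules built on `istio_requests_total` and `istio_request_duration_milliseconds`. It assumes Prometheus is already scraping the Istio proxies, as the sample addon does; the group and rule names are made up for illustration.

```yaml
# Hypothetical Prometheus rule file; group and rule names are illustrative
groups:
  - name: istio-service-health
    rules:
      # Requests per second received by each service, as reported by the server-side proxy
      - record: destination_service:istio_requests:rate5m
        expr: sum by (destination_service) (rate(istio_requests_total{reporter="destination"}[5m]))
      # Fraction of requests answered with a 5xx status code
      - record: destination_service:istio_requests_5xx:ratio_rate5m
        expr: |
          sum by (destination_service) (rate(istio_requests_total{reporter="destination", response_code=~"5.."}[5m]))
          /
          sum by (destination_service) (rate(istio_requests_total{reporter="destination"}[5m]))
      # 99th-percentile request latency in milliseconds
      - record: destination_service:istio_request_duration_milliseconds:p99_5m
        expr: histogram_quantile(0.99, sum by (destination_service, le) (rate(istio_request_duration_milliseconds_bucket{reporter="destination"}[5m])))
```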

Jaeger

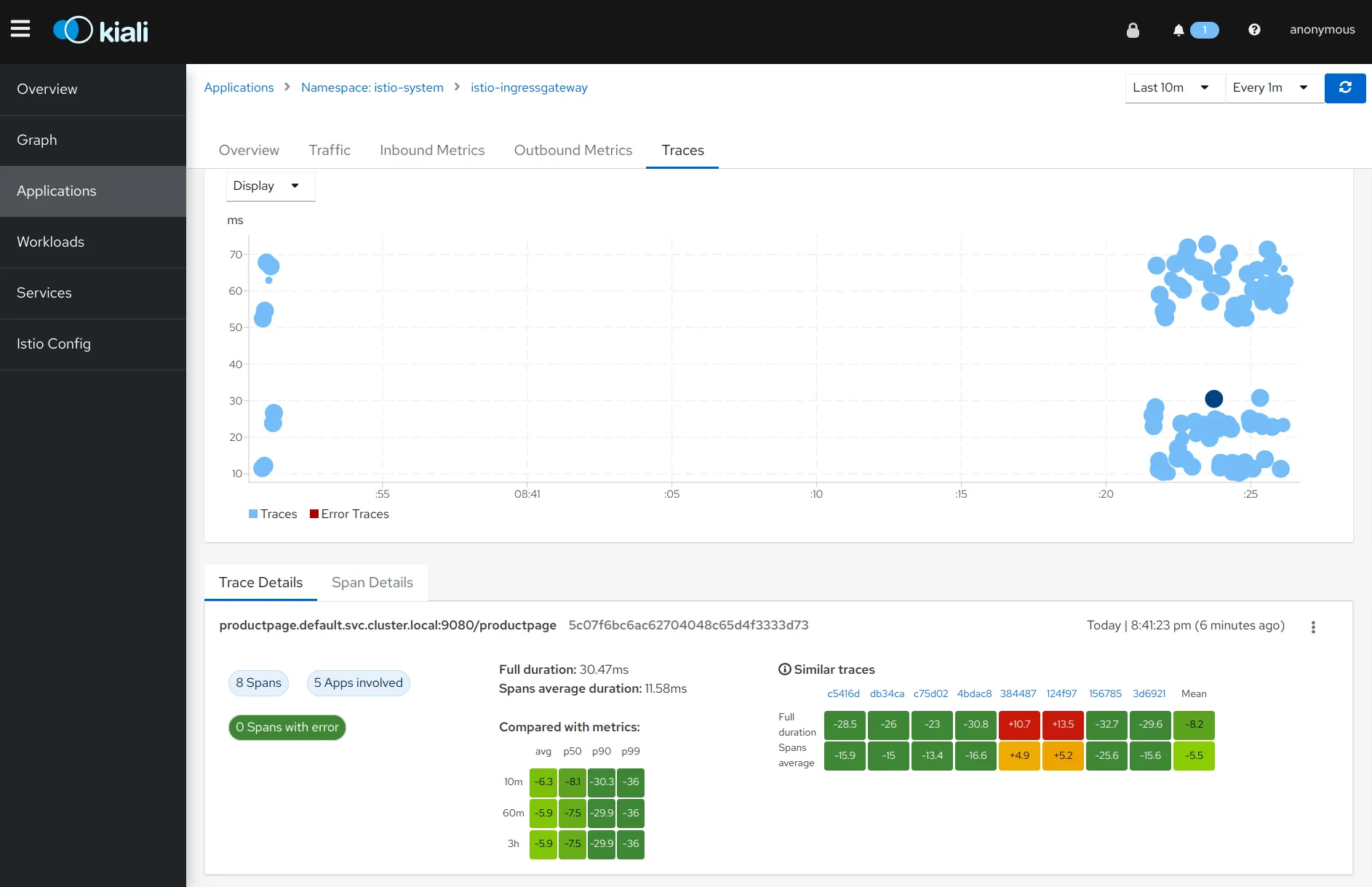

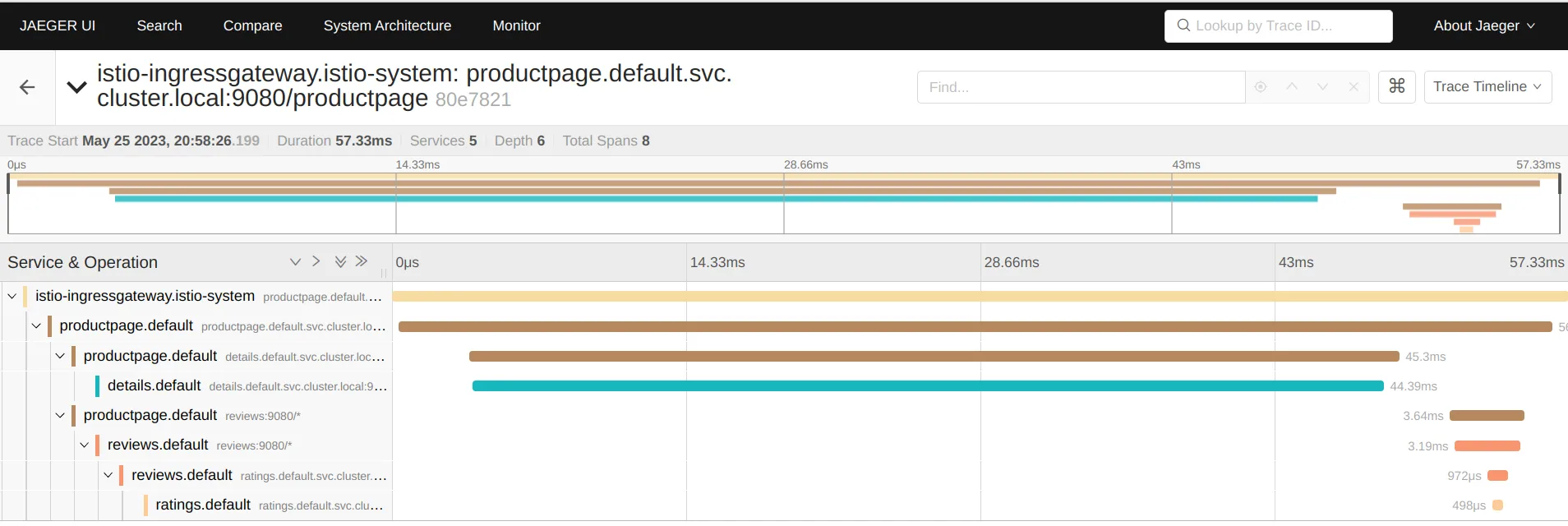

Istio also integrates with Jaeger, a distributed tracing system. By tracing requests as they traverse various services, Jaeger provides a detailed view of the interactions between services and the latency involved, helping you understand and diagnose performance issues.

For example, the trace in the screenshot shows that most of the request processing time is spent in the details service.
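
If Jaeger shows few or no traces, the sampling rate is worth checking: depending on the installation profile, Istio may sample only a small percentage of requests. Assuming an Istio release that supports the Telemetry API, a sketch for raising the mesh-wide sampling rate looks like this; the `apiVersion` may differ in your release, and sampling every request is only sensible for testing.

```yaml
apiVersion: telemetry.istio.io/v1alpha1
kind: Telemetry
metadata:
  name: mesh-default
  # A Telemetry resource in the root namespace applies to the whole mesh
  namespace: istio-system
spec:
  tracing:
  - randomSamplingPercentage: 100.00   # trace every request; lower this outside of testing
```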

3. Security

Istio enhances the security of your services in several ways, including mutual TLS (mTLS).

Mutual TLS

Encrypting communication inside a Kubernetes cluster is generally considered good practice: it strengthens the cluster's security posture by protecting traffic between nodes against eavesdropping and by mitigating the risks from intrusions.

Istio enables mTLS automatically, so by default the traffic between Istio proxies is mutually authenticated and encrypted. Therefore, you benefit from the increased security without modifying your application code to handle TLS.
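
One nuance: by default Istio runs mTLS in permissive mode, which means sidecars also accept plaintext traffic, for example from workloads without a proxy. A minimal sketch of enforcing mTLS across the whole mesh, assuming `istio-system` is your Istio root namespace (the default):

```yaml
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  # A policy in the root namespace applies mesh-wide
  namespace: istio-system
spec:
  mtls:
    mode: STRICT   # reject plaintext connections to workloads with sidecars
```

Before switching to STRICT, make sure every client of your services has a sidecar; otherwise their plaintext requests will be rejected.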

4. Resiliency

Istio includes several features to enhance the resiliency of your services, such as retries and circuit breakers.

Retries & Timeouts

Istio provides retries and timeouts for your microservices without requiring changes to application code.

When a service request fails, Istio can automatically retry the request. This increases the likelihood that the request will eventually succeed, improving the reliability of your services.

Istio also makes timeouts for requests inside your mesh easy to configure: you only add a few lines to your VirtualService custom resource manifest. The example below sets both retries and a timeout for the ratings service.

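```yaml
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: ratings
spec:
  hosts:
  - ratings
  http:
  - route:
    - destination:
        host: ratings
        subset: v1
    retries:
      attempts: 3                               # retry a failed request up to 3 times
      perTryTimeout: 2s                         # each attempt gives up after 2 seconds
      retryOn: connect-failure,refused-stream   # only retry on these failure types
    timeout: 10s                                # overall budget for the request, retries included
```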
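
The route above points at `subset: v1`, which only resolves if a DestinationRule defines that subset; without one, requests to ratings fail. A minimal sketch, assuming the ratings Pods are labeled `version: v1` as in Istio's Bookinfo sample:

```yaml
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: ratings
spec:
  host: ratings
  subsets:
  - name: v1
    labels:
      version: v1   # assumed Pod label; must match your ratings Deployment
```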

Circuit Breakers

In a distributed system, failures can cascade from one service to another, potentially leading to system-wide outages. Circuit breakers can prevent this by limiting the impact of failures. When a service starts failing, the circuit breaker "opens" to stop sending requests to the failing service. Once the service recovers, the circuit breaker "closes", and requests can reach the service again.

```yaml
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: httpbin
spec:
  host: httpbin
  trafficPolicy:
    outlierDetection:
      consecutive5xxErrors: 2
      interval: 1s
      baseEjectionTime: 3m
      maxEjectionPercent: 100
```

In the example above, the outlierDetection block under trafficPolicy declares the behavior of the circuit breaker applied to the httpbin service.

consecutive5xxErrors: 2: This means that if a service instance returns two consecutive 5xx errors, it will be ejected from the load balancing pool.

interval: 1s: This is the interval between ejection analysis sweeps. Here it is set to one second; in other words, Istio checks the health of the service's hosts every second.

baseEjectionTime: 3m: This is the minimum ejection duration. An ejected host stays out of the pool for baseEjectionTime multiplied by the number of times it has been ejected, so hosts that keep failing are ejected for longer each time. Here it is set to 3 minutes.

maxEjectionPercent: 100: This is the maximum percentage of hosts in the load balancing pool for the service that can be ejected. The value is set to 100, which means all hosts can be ejected.

Summary

In this article, I shared four advantages of Istio: traffic management, observability, security, and resiliency. I hope they motivate you to learn more about Istio, and perhaps to introduce it into your Kubernetes cluster. Below are the references that helped me most while learning Istio.

References

- Udemy: Istio Hands-On for Kubernetes (highly recommended)

- Getting Started to Istio